Hey there 👋

Glad to have you back.

Artificial intelligence is now everywhere. The term appears in product launches, marketing campaigns and everyday conversations about technology. Yet much of what happens behind the scenes remains poorly understood.

In 2025, a small controversy on YouTube highlighted this confusion.

The platform was quietly testing a feature designed to enhance video quality on Shorts. The system used algorithms to reduce blur and improve clarity during processing, techniques similar to those already used in modern smartphone cameras.

But creators didn’t like it and quickly pushed back.

Many worried the platform was introducing AI slop into their content without consent, assuming the feature relied on generative AI to alter or recreate their videos.

YouTube responded publicly:

“No GenAI, no upscaling. We’re running an experiment on select YouTube Shorts that uses traditional machine learning technology to unblur, denoise, and improve clarity in videos during processing.”

The company emphasized that the experiment relied on conventional machine learning methods rather than generative AI.

For many observers, the explanation raised an obvious question.

“Aren’t those the same thing?”

They are not.

And that confusion points to a larger problem in how artificial intelligence is discussed today. Terms like machine learning, deep learning, generative AI, and even broader ideas such as AGI and ANI rarely make it into public conversation. Instead, the label “AI” is slapped on top of them. The result is a blurred understanding of what these systems actually are and how they work.

This article focuses on clearing up that confusion.

The Foundation: What Machine Learning Actually is

Machine learning (ML) is a branch of artificial intelligence focused on building systems that learn from data rather than following fixed instructions. Instead of programming every rule manually, developers train algorithms on datasets so the system can recognize patterns and make decisions on its own.

In other words, machine learning allows computers to classify information, detect patterns, and make predictions based on what they have already seen.

Take phishing emails, for example. One might occasionally slip into your inbox, but the filter learns from patterns shared across similar messages, and as it processes more data, it gets better at recognizing and blocking them automatically.

The concept itself is not new. Researchers have been exploring machine learning since the 1950s, long before the current wave of AI hype. Today, many everyday systems rely on it, including spam filters, recommendation engines, tools that upscale video quality and much more.

Most of these systems do not generate new content. Their role is to analyze existing data and make informed decisions based on patterns within it.

Self-driving vehicles are another great example. These systems process enormous amounts of sensor and camera data to recognize road signs and navigate traffic. The model improves by learning from previous driving data and continuously refining how it responds to real-world situations.

In many ways, machine learning forms the technical foundation beneath much of what we now describe as AI.

Machine learning itself comes in several forms. While the field is broad, most applications fall into three major categories.

Supervised Machine Learning

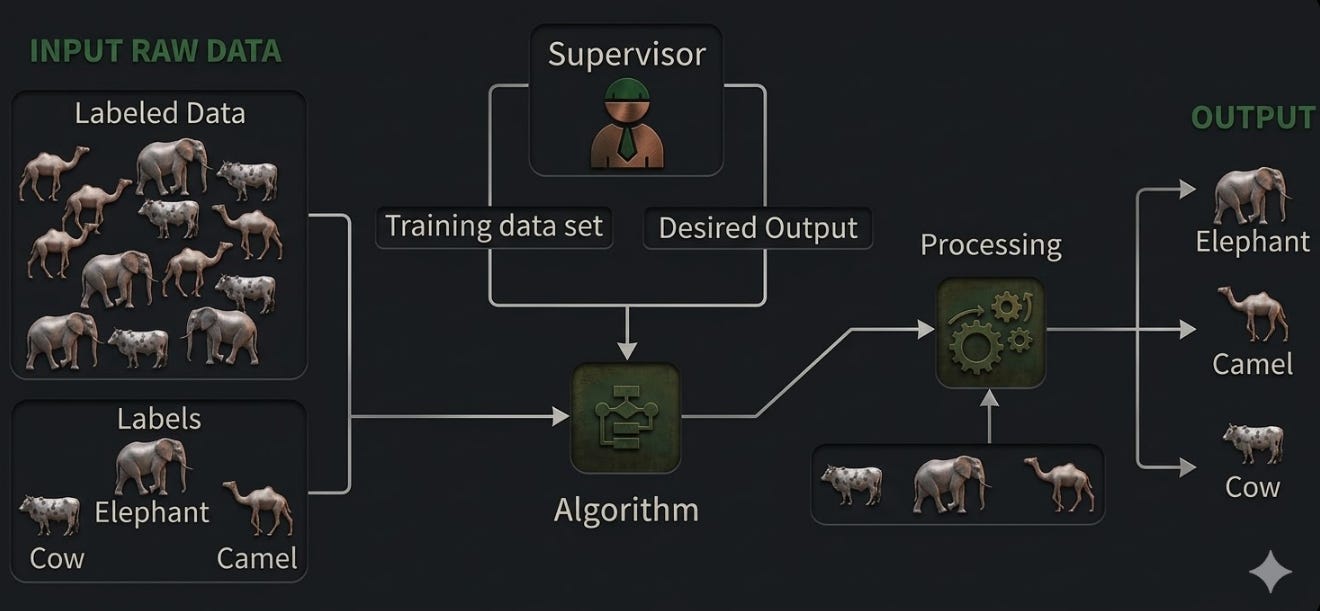

Supervised learning involves training a model using labeled data, where both the inputs and the correct outputs are already known. The algorithm learns how to map inputs to the correct results by studying these examples.

For instance, a model can be trained using labeled images of animals. Each image already includes the correct label. After training on enough examples, the system learns the visual features associated with each category. When it encounters a new image, it can predict which animal it contains.
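To make the idea concrete, here is a minimal sketch of supervised learning using a nearest-centroid classifier. It is only an illustration: real image models work on pixels, while this toy uses two made-up numeric features (weight and height), and the function names and data are assumptions invented for the example.

```python
from collections import defaultdict


def train_centroids(examples):
    """Average the feature vectors of each label to get one centroid per class."""
    sums = {}
    counts = defaultdict(int)
    for features, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
        for i, value in enumerate(features):
            sums[label][i] += value
        counts[label] += 1
    return {
        label: [s / counts[label] for s in total]
        for label, total in sums.items()
    }


def predict(centroids, features):
    """Return the label whose centroid is closest (squared Euclidean distance)."""
    def distance(label):
        return sum((a - b) ** 2 for a, b in zip(centroids[label], features))
    return min(centroids, key=distance)


# Hypothetical labeled data: (weight in kg, height in cm) paired with the correct answer.
training_data = [
    ((4.0, 25.0), "cat"),
    ((5.0, 23.0), "cat"),
    ((3.5, 24.0), "cat"),
    ((30.0, 60.0), "dog"),
    ((25.0, 55.0), "dog"),
    ((35.0, 65.0), "dog"),
]

centroids = train_centroids(training_data)

# A new, unlabeled example: the model predicts a label it was never told.
print(predict(centroids, (4.5, 26.0)))
```

The key point is that nobody wrote a rule like "small means cat". The mapping from features to labels comes entirely from the labeled examples.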

Unsupervised Machine Learning

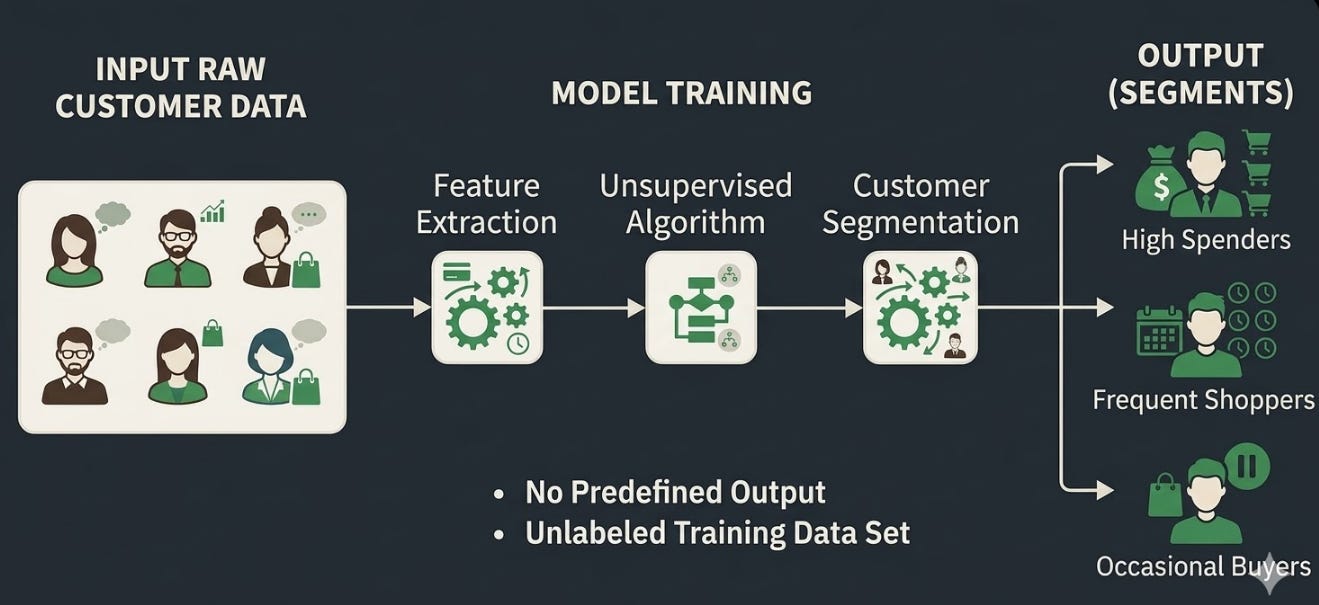

Unsupervised learning works differently. Instead of labeled data, the model receives raw information without predefined answers and must identify patterns on its own.

A common example appears in customer data analysis. If a company has large datasets about purchasing behavior but no predefined categories, an unsupervised model can group customers with similar habits. These clusters can then help businesses understand more about each customer and then develop marketing strategies accordingly.
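The customer-grouping idea can be sketched with k-means, one common clustering method (the article does not name a specific algorithm, so treat this choice as an assumption). The customer numbers below are invented, and the starting centers are simply the first two points to keep the example deterministic; real code usually randomizes them.

```python
def kmeans(points, k, iterations=10):
    """Group points into k clusters by alternating assignment and averaging."""
    # Deterministic start for the sketch: the first k points act as initial centers.
    centers = [list(p) for p in points[:k]]
    assignments = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest center.
        for i, p in enumerate(points):
            assignments[i] = min(
                range(k),
                key=lambda c: sum((a - b) ** 2 for a, b in zip(p, centers[c])),
            )
        # Update step: move each center to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:
                centers[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return assignments, centers


# Hypothetical customers: (orders per month, average basket value). No labels anywhere.
customers = [(1, 20), (12, 150), (2, 25), (11, 140), (1, 30), (13, 160)]

assignments, centers = kmeans(customers, k=2)
print(assignments)
print(centers)
```

Notice that the model is never told what the groups mean. It only discovers that some customers look alike; deciding that one cluster is "occasional shoppers" and the other "big spenders" is still a human job.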

Reinforcement Learning

Reinforcement learning trains systems through trial and error. Instead of labeled examples, the model interacts with an environment and receives feedback in the form of rewards or penalties.

Consider an AI system learning to play chess. Strong moves earn positive feedback, and weak moves earn penalties. Over time, by experimenting with different strategies and observing the results, the system learns how to make increasingly effective decisions.
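Chess is far too large for a short example, so here is a rough sketch of the same trial-and-error loop on a tiny made-up problem: an agent on a five-position line that earns a reward for reaching the last position and a small penalty for every other step. It uses tabular Q-learning, one classic reinforcement learning method; the environment, reward values, and parameters are all assumptions chosen for illustration.

```python
import random

N_STATES = 5        # positions 0..4 on a line; position 4 is the goal
ACTIONS = (-1, +1)  # index 0 = step left, index 1 = step right


def step(state, action):
    """Move the agent and return (next_state, reward, done)."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    if next_state == N_STATES - 1:
        return next_state, 1.0, True   # reward for reaching the goal
    return next_state, -0.1, False     # small penalty for every other move


def train(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    """Learn action values from random exploration using the Q-learning update."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap episode length
            a = rng.randrange(2)  # explore by acting randomly
            next_state, reward, done = step(state, ACTIONS[a])
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
            if done:
                break
    return q


q_table = train()

# Read off the learned behavior: in each non-goal position, pick the higher-valued action.
policy = ["left" if q[0] > q[1] else "right" for q in q_table[:-1]]
print(policy)
```

No one tells the agent that "right" is the correct direction. It works that out from the rewards and penalties alone, which is the core idea behind reinforcement learning.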

Where the Confusion Begins: Traditional ML vs Generative AI

This distinction is where the YouTube controversy started to get messy. People assumed the feature involved generative AI, when the technology behind it was much closer to the machine learning systems that have existed for decades.

Traditional Machine Learning

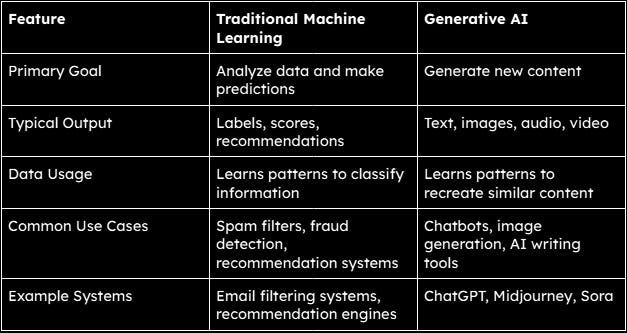

Earlier, we covered machine learning as the broader concept. Within it, traditional machine learning handles the “analyzing data and making predictions” part described above.

Just keep this in mind: traditional ML works with existing data and tries to determine what something is or what might happen next. It does NOT generate new material.

Generative AI

Generative AI follows a different approach.

Instead of focusing only on prediction or classification, generative models are trained to produce new outputs that resemble the data they were trained on. The model studies patterns across massive datasets and learns how to recreate similar structures.

This ability allows generative systems to produce things like written text, digital images, music, computer code, or even video.

Tools such as ChatGPT generate natural-language responses. Image generators like Midjourney produce artwork from simple prompts, while emerging video models such as Sora can create entire scenes from text descriptions.
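To see the difference between predicting and generating in the smallest possible form, here is a toy word-level generator. It is a deliberately crude sketch and not how tools like ChatGPT work internally (those use large neural networks), but the principle is the same: learn patterns from training data, then sample new output that resembles it. The corpus and function names are made up for the example.

```python
import random
from collections import defaultdict


def train_bigrams(text):
    """Record, for every word, which words were seen following it."""
    words = text.split()
    followers = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        followers[current].append(nxt)
    return followers


def generate(followers, start, length, seed=0):
    """Build a new word sequence by repeatedly sampling a plausible next word."""
    rng = random.Random(seed)
    output = [start]
    while len(output) < length and followers.get(output[-1]):
        output.append(rng.choice(followers[output[-1]]))
    return output


# Hypothetical training text.
corpus = "the cat sat on the mat the dog sat on the rug the cat chased the dog"

followers = train_bigrams(corpus)
generated = generate(followers, "the", 8)
print(" ".join(generated))
```

The output is a sequence the model was never shown in full, assembled from patterns it picked up during training. A classifier would have stopped at "this is a sentence about cats"; a generative model writes the sentence.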

Deep Learning and the Architecture Behind Modern AI

Beyond machine learning and generative AI, two technical ideas power much of the AI people interact with today - deep learning and transformer models.

These ideas are rarely discussed outside technical circles, yet they sit at the core of many of the systems that define modern AI.

Understanding Deep Learning

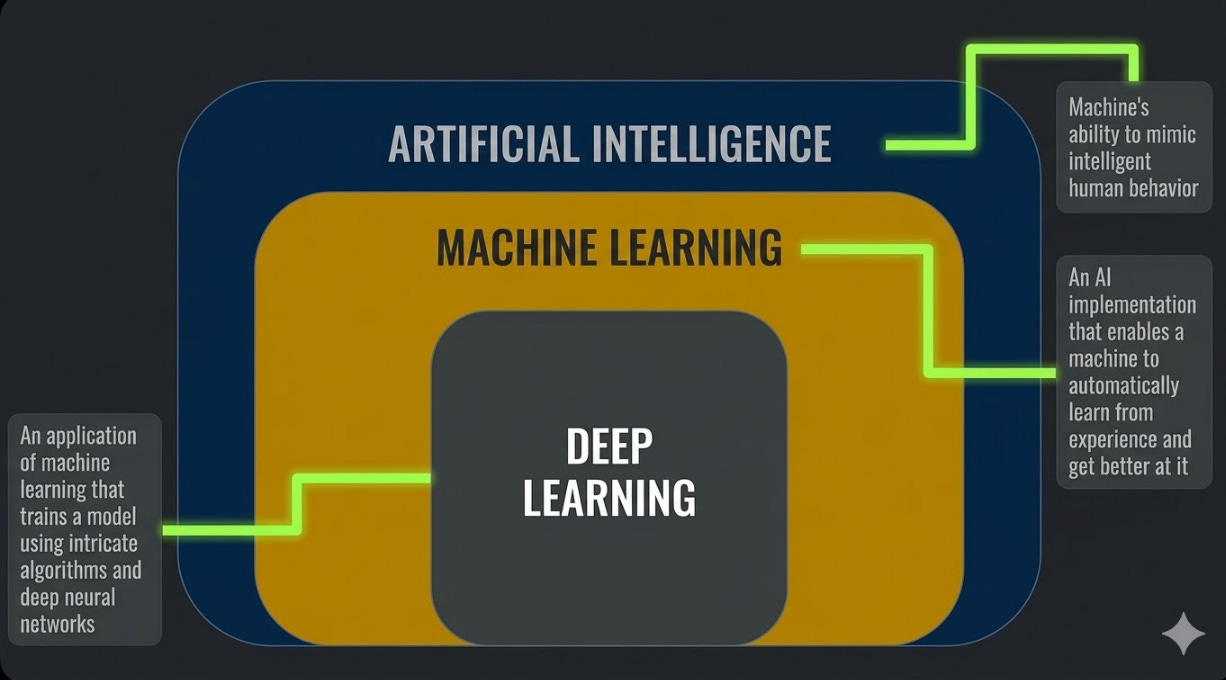

Deep learning is a branch of machine learning that uses artificial neural networks with many layers to analyze data.

The relationship between these fields is often easiest to picture as a hierarchy. Artificial intelligence sits at the top, machine learning forms a major subfield within it, and deep learning represents a more specialized area inside machine learning.

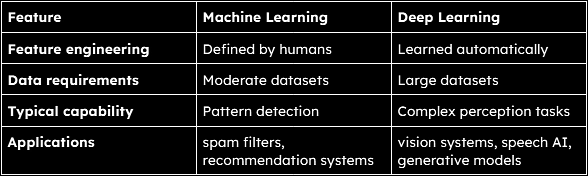

The key difference between traditional machine learning and deep learning lies in how systems learn patterns.

Traditional ML systems often rely on human engineers to define the features a model should examine. For example, if a model needs to identify stop signs, programmers may specify characteristics such as shape, color, or edge patterns.

Deep learning models reduce this manual work. By analyzing large datasets, neural networks can learn those characteristics automatically. Instead of being told what features matter, the system discovers them during training.

This ability to learn features automatically allows deep learning models to handle far more complex tasks. Today they power technologies ranging from computer vision systems and speech recognition to robotics and self-driving vehicles.
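To give a feel for what "layers" means, here is a minimal sketch of a two-layer network computing XOR, a function a single linear layer cannot represent. One big caveat: the weights below are set by hand purely for illustration. In a real deep learning system they would be learned from data during training, and the network would have far more layers and units.

```python
def relu(values):
    """Common activation function: negative values become zero."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One fully connected layer: a weighted sum per output unit, plus a bias."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


# Hand-picked weights (an assumption for this sketch; normally learned in training).
HIDDEN_WEIGHTS = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_BIASES = [0.0, -1.0]
OUTPUT_WEIGHTS = [[1.0, -2.0]]
OUTPUT_BIASES = [0.0]


def forward(x):
    """Pass the input through the hidden layer, then the output layer."""
    hidden = relu(dense(x, HIDDEN_WEIGHTS, HIDDEN_BIASES))
    return dense(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)[0]


for pair in ([0, 0], [0, 1], [1, 0], [1, 1]):
    print(pair, forward(pair))
```

The hidden layer turns the raw inputs into intermediate features, and the output layer combines them. Stacking many such layers is what lets deep networks build up from edges to shapes to whole objects.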

Understanding Transformer Models

In 2017, researchers introduced a new neural network architecture called the transformer. This design quickly became the foundation for many of today’s most powerful AI systems.

Transformers are built to process sequences of information, such as sentences, lines of code, audio signals, or even frames in a video. Their key innovation is a mechanism known as attention, which allows the model to evaluate how different parts of an input relate to each other.

Instead of reading data strictly in order, transformers can analyze relationships between all elements at once. This helps the model understand context far more effectively.
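Here is a stripped-down sketch of that attention step, usually called scaled dot-product self-attention. It makes a simplifying assumption: each token's vector is used directly as its query, key, and value, whereas real transformers first pass the vectors through learned projections and run several attention heads in parallel. The three-token input is invented for the example.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Let every token look at every token and blend them by similarity."""
    dim = len(vectors[0])
    weights, outputs = [], []
    for query in vectors:
        # Similarity of this token to each token, scaled by sqrt(dimension).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        row = softmax(scores)
        weights.append(row)
        # New representation: a weighted average of all token vectors.
        outputs.append(
            [sum(w * v[i] for w, v in zip(row, vectors)) for i in range(dim)]
        )
    return weights, outputs


# Hypothetical 2-dimensional token vectors; tokens 0 and 2 are identical.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]

weights, outputs = self_attention(tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of weights says how much one token "pays attention" to every other token, all computed in one pass rather than word by word. In this toy input, the first token attends more to the third (its twin) than to the second.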

Although transformers are often associated with text generation, the architecture is not limited to language. Variants such as Vision Transformers (ViT) are used in computer vision, while similar approaches power multimodal models that combine text, images, and audio.

Many modern AI systems rely on this architecture. The models behind tools such as ChatGPT and Claude are built on transformer-based designs. Large language models most commonly use decoder-only variants, while encoder-decoder variants are used for some other tasks.

The Three Levels of AI

When people talk about the future of AI, they usually refer to three stages: narrow intelligence, general intelligence, and superintelligence.

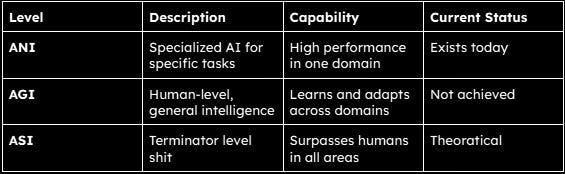

Artificial Narrow Intelligence (ANI)

Artificial Narrow Intelligence is the only form of AI currently in real-world use. The word narrow simply means specialization. These systems are built to perform specific tasks extremely well. They operate within defined boundaries and do not extend beyond them.

Everyday technologies fall into this category. Voice assistants respond to commands, recommendation systems analyze user behavior, and tools like ChatGPT generate text or code. Each system performs its function with impressive accuracy, but none of them truly understands the world beyond its training.

Of course, limitations exist. For example, a system trained to recommend movies cannot suddenly start driving a car or managing finances.

Artificial General Intelligence (AGI)

Artificial General Intelligence represents a system that can think, learn, and adapt across multiple domains, much like a human.

Unlike ANI, an AGI system would not be limited to a single task. In theory, it could learn new skills, apply knowledge across different fields, and solve unfamiliar problems without retraining.

Despite growing public discussion, AGI has not been achieved. Headlines suggesting that systems are becoming conscious or achieving human-level intelligence remain speculative and are often driven by hype rather than technical reality.

Artificial Superintelligence (ASI)

Artificial Superintelligence goes a step further. It describes a system that surpasses humans in reasoning, creativity, decision-making, and even social understanding.

In theory, an ASI system would be able to operate at a scale far beyond human thinking.

The idea often appears in science fiction because of its extreme implications. A system capable of compressing decades of scientific progress into seconds would fundamentally reshape every field it touches.

It’s important to note that, at present, ASI remains entirely theoretical. Even reaching AGI is still an unsolved challenge, which places superintelligence far beyond current capabilities.

Why Everything is Suddenly AI

“If most systems are just machine learning, why does everything get labeled AI?”

It’s because of moneyyy!

A useful comparison comes from the term greenwashing, where companies exaggerate how environmentally friendly a product is to appeal to consumers. A similar pattern is now visible in tech, often referred to as AI washing - the practice of labeling products as “AI-powered” to make them appear more advanced than they actually are.

The thing is, investors associate AI with “future growth”, consumers view AI features as “cutting-edge”, and companies feel pressure to keep up with competitors adopting the label. Calling something “AI” signals “innovation”, even when the underlying system is little more than standard automation or basic machine learning.

As a result, the definition of AI has been stretched considerably. Tools that would once have been described as algorithms or analytics systems are now rebranded under the AI umbrella.

There have already been public examples of this trend. A campaign by Coca-Cola drew criticism after claiming a new drink had been co-created with AI, without clearly explaining what role the technology actually played. Reporting by Forbes highlighted how the claim appeared to rely more on branding than substance.

Regulators are also beginning to respond. In 2024, the U.S. Securities and Exchange Commission charged two firms with making misleading statements about how extensively they used AI in their investment strategies.

The point is - AI has become a marketing label and like any label tied to hype, it gets stretched.

The Rise of Agentic AI

While much of the confusion around AI comes from marketing, the technology itself is still evolving. One of the latest developments is what many now call agentic AI.

Unlike traditional systems that wait for prompts, these systems are designed to take action. They can coordinate and interact with different software tools to complete an objective. Three related ideas are worth separating:

Generative AI models, such as those behind ChatGPT or image generators like Midjourney, focus on producing content.

AI agents go a step further by using that intelligence to perform specific tasks, such as scheduling actions, retrieving data, or executing workflows across applications.

Agentic AI extends this idea even further. It involves multiple agents working together, coordinating tasks and decisions with minimal human input.
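The step from "generating content" to "taking action" is easier to see in code. Below is a rough sketch of a single agent loop: decide on a step, call a tool, look at the result, repeat. Everything here is hypothetical. The two tools return canned data, and `choose_next_step` is a hard-coded stand-in for what would normally be a model deciding what to do next.

```python
def lookup_weather(city):
    """Hypothetical tool: returns canned data instead of calling a real service."""
    return {"city": city, "forecast": "rain"}


def schedule_reminder(text):
    """Hypothetical tool: pretends to create a reminder."""
    return f"reminder set: {text}"


TOOLS = {
    "lookup_weather": lookup_weather,
    "schedule_reminder": schedule_reminder,
}


def choose_next_step(goal, history):
    """Stand-in for the model: picks the next tool call, or None when finished."""
    if len(history) == 0:
        return ("lookup_weather", goal["city"])
    if len(history) == 1 and history[0][2]["forecast"] == "rain":
        return ("schedule_reminder", "bring an umbrella")
    return None


def run_agent(goal, max_steps=5):
    """Loop: decide, act through a tool, record the result, until done."""
    history = []
    for _ in range(max_steps):  # hard cap so the agent cannot loop forever
        action = choose_next_step(goal, history)
        if action is None:
            break
        tool_name, argument = action
        result = TOOLS[tool_name](argument)
        history.append((tool_name, argument, result))
    return history


history = run_agent({"city": "Oslo"})
for tool_name, argument, result in history:
    print(tool_name, argument, result)
```

Even in this toy, you can see where the risk comes from: the loop acts on whatever the decision step returns. That is why real agent setups tend to restrict which tools are available and cap the number of steps, as the `max_steps` guard does here.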

Projects like OpenClaw highlight how multiple agents can coordinate to handle complex tasks. However, this space is still in its early stages. These systems often struggle with reliability and introduce serious security risks when given access to external environments.

Despite these limitations, progress is being made. Agentic systems are likely to improve and expand, making them an area worth watching closely.

Why Understanding This Matters

At a glance, the distinctions between different types of AI models and technologies can seem academic. In reality, they directly affect how AI systems behave.

A recommendation engine predicts preferences. A generative model produces content that may or may not be accurate. An agentic system can take action, which introduces a different level of risk and responsibility.

Knowing what kind of system you are dealing with helps set expectations and answer questions like: Can it make decisions on its own? Can it generate misleading outputs? Should its actions be verified?

It also helps filter out hype.

We also have terms like “singularity” - the idea that AI could surpass human intelligence and rapidly evolve beyond control - which are often used to create urgency and attention. In reality, current systems remain far from that scenario.

Understanding the layers of AI makes one thing clear - the technology is advancing, but it is still bounded. And recognizing those boundaries is what keeps expectations grounded.

What Comes Next

Much of today’s AI conversation revolves around the claim that current systems are rapidly approaching Artificial General Intelligence (AGI).

Despite their capabilities, modern AI systems still struggle with core problems such as reasoning, long-term planning, and understanding cause and effect. They can generate convincing outputs, but that doesn’t mean they truly understand what they produce.

These limitations matter. They suggest that simply scaling current models may not automatically lead to human-level intelligence. And this doesn’t mean progress has stalled. It means expectations need to be calibrated.

In short, AI is not a single system or breakthrough. It is an umbrella term that covers a range of technologies built on top of each other - from machine learning to deep learning, from transformer architectures to generative models.

Each layer adds capability, but not all of them represent the same level of innovation.

Some, like generative models, have introduced visible and impactful changes in how people interact with technology. Others are slow improvements that refine existing systems rather than redefine them.

With that being said, thanks for reading.

Peace out.

One Last Thing

Most people interact with systems without questioning them. This space is about doing the opposite: understanding how things work, where they fail, and what that means in practice.

If that’s the kind of content you want more of, subscribe. And if this piece added something new to how you see things, pass it on. Good information only matters if it travels.

And if you’re active elsewhere, follow along on Bluesky, X (Twitter), or LinkedIn - those are the places I show up most consistently outside Substack. A follow, a like, a repost - it matters to me more than you think.
